You’re probably familiar with the way good sound design can bring a game or video to life. It can take huge teams of creators hour upon hour to get the audio just right, but almost no amount of time is enough to craft perfect audio for a virtual reality experience. Sound design is vastly more complicated in VR because of the inherently unscripted nature of the simulations, but a new algorithm from researchers at Stanford could finally change that.

In scripted media like a pre-rendered 2D video, you always know where sound should come from — the audio levels for each channel never change from one viewing to the next. Even a 3D game has a workable level of complexity thanks to the predetermined parameters of the environment. With VR, there are simply too many variables to create perfect, realistic sound from every perspective.

Currently, the algorithms for creating sound models come from work done more than a century ago by scientist Hermann von Helmholtz. In the late 19th century, Helmholtz devised some of the theoretical underpinnings of wave propagation. The so-called Helmholtz Equation has since become a major component of audio modeling along with the boundary element method (BEM).
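For reference, this is the Helmholtz equation in its standard textbook form, which describes the spatial part of a sound field oscillating at a single frequency. The symbols are the usual conventions rather than anything taken from the researchers’ work: $p$ is the acoustic pressure amplitude, $\omega$ the angular frequency, $c$ the speed of sound, and $k$ the wavenumber.

$$
\nabla^2 p(\mathbf{x}) + k^2\, p(\mathbf{x}) = 0, \qquad k = \frac{\omega}{c}
$$

Because the equation is tied to one frequency at a time, each frequency of interest needs its own solve, which helps explain why the cost adds up quickly.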

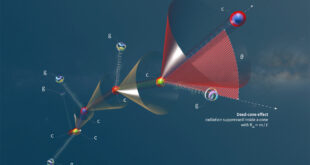

That’s all well and good if you’re dealing with an environment without too many variables. Virtual reality ratchets up the number of possible audio models to previously unheard-of levels. To make VR sound authentic, engineers would need to create sound models based on where the viewer is standing in the virtual world and what they’re looking at. Doing that with the Helmholtz Equation and BEM would take powerful computers multiple hours, which is far from practical.
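To see why position and viewing direction matter, here is a deliberately simple sketch of how left and right channel levels might depend on the listener. It is not the kind of sound model the researchers compute; it assumes a flat 2D world, inverse-distance falloff, and a constant-power pan law, and the function name `stereo_gains` is made up for this illustration.

```python
import math

def stereo_gains(listener_pos, listener_yaw, source_pos):
    """Return (left, right) gains for one sound source.

    Positions are 2D (x, y) tuples. listener_yaw is the facing direction in
    radians, measured counter-clockwise from the +x axis.
    """
    dx = source_pos[0] - listener_pos[0]
    dy = source_pos[1] - listener_pos[1]
    distance = math.hypot(dx, dy)

    # Angle of the source relative to where the listener is facing.
    # A positive angle means the source is to the listener's left.
    relative_angle = math.atan2(dy, dx) - listener_yaw

    # Map to a pan value in [-1, 1]: -1 is fully left, +1 is fully right.
    # Note: this simple mapping cannot tell front from back.
    pan = -math.sin(relative_angle)

    # Constant-power pan law keeps perceived loudness steady across the arc.
    theta = (pan + 1.0) * math.pi / 4.0

    # Assumed inverse-distance falloff, clamped so nearby sources don't blow up.
    attenuation = 1.0 / max(distance, 1.0)

    return attenuation * math.cos(theta), attenuation * math.sin(theta)

# Same source, same listener position, two different facing directions.
facing_east = stereo_gains((0.0, 0.0), 0.0, (0.0, 2.0))
facing_source = stereo_gains((0.0, 0.0), math.pi / 2, (0.0, 2.0))
```

Turning the listener’s head changes both channel levels even though nothing else in the scene moved, and a full VR sound model has to account for far more than this toy does.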

The potential solution comes from Stanford professor Doug James and graduate student Jui-Hsien Wang. The new GPU-accelerated algorithm calculates sound models thousands of times faster by completely avoiding the Helmholtz Equation and BEM. We’re talking seconds of processing instead of hours.

The pair’s approach borrows from 20th-century Austrian composer Fritz Heinrich Klein, who found a way to generate the “Mother Chord” from multiple piano notes. They call their algorithm KleinPAT in recognition of his posthumous contribution. The video above includes some comparisons between Helmholtz-generated sound models and KleinPAT. They sound very similar, which is the point. You can get almost identical sound from KleinPAT with much less computing time. The researchers believe this algorithm could be a game-changer for simulating audio in dynamic 3D environments.
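The article doesn’t spell out KleinPAT’s internals, so the following is only a loose illustration of the chord intuition, not the researchers’ algorithm. Several tones are summed into one signal, and each tone’s strength is then recovered from that single combined signal. The frequencies, amplitudes, sample rate, and function names are all invented for this sketch.

```python
import math

SAMPLE_RATE = 8000  # samples per second (illustrative value)
DURATION = 1.0      # seconds; a whole-second window keeps integer-Hz tones separable

def make_chord(tones, sample_rate=SAMPLE_RATE, duration=DURATION):
    """Sum several sine tones, given as {frequency_hz: amplitude}, into one signal."""
    n = int(sample_rate * duration)
    return [
        sum(amp * math.sin(2 * math.pi * freq * i / sample_rate)
            for freq, amp in tones.items())
        for i in range(n)
    ]

def recover_amplitude(signal, freq, sample_rate=SAMPLE_RATE):
    """Estimate one tone's amplitude by projecting the signal onto a sine and
    cosine at that frequency (a single-frequency Fourier projection)."""
    n = len(signal)
    sin_part = sum(x * math.sin(2 * math.pi * freq * i / sample_rate)
                   for i, x in enumerate(signal))
    cos_part = sum(x * math.cos(2 * math.pi * freq * i / sample_rate)
                   for i, x in enumerate(signal))
    return 2 * math.hypot(sin_part, cos_part) / n

# Three hypothetical "notes" played together as one chord.
tones = {220: 1.0, 330: 0.5, 440: 0.25}
chord = make_chord(tones)

# Pull each note's amplitude back out of the single combined signal.
recovered = {freq: recover_amplitude(chord, freq) for freq in tones}
```

The point of the toy is that one combined signal can carry several distinct frequencies at once, and they can still be pulled apart afterward because they don’t overlap.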

